Virtualization versus Containers: Is there a clear winner? Does it really matter?

Will virtual machines disappear? No. Not anytime soon.

The question implies that one day container technology will replace traditional virtual machines. So, I will save you from reading this entire piece to learn whether there is a clear winner. The answer is: no. There isn’t a clear winner, primarily because the two technologies are not one and the same. Each has its own features and functions, and each solves its own set of problems. Understanding the problems each one solves will help you avoid misusing the technology.

Virtual Machines

So, what is a virtual machine? Nowadays, hardware virtualization is quite commonplace in the computing industry. It is a technology that has also become more available to end users. The technology was originally developed to make better use of physical servers by enabling the over-provisioning of resources and, in turn, re-using them at the end of the virtual server lifecycle. Why invest in more server hardware and leave it underutilized, when instead you can consolidate the workloads onto one or a few servers and share their resources? The costs of acquiring new hardware, of energy consumption and of management are reduced significantly.
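To make the consolidation argument concrete, here is a minimal sketch of packing workloads onto as few physical hosts as possible. Everything in it is an assumption made for illustration: the `Host` class, the `consolidate` function, the use of memory as the only resource, and the first-fit-decreasing heuristic are not taken from any particular hypervisor or scheduler.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """A hypothetical physical server, sized by memory only (GiB)."""
    name: str
    memory_gib: int
    overcommit: float = 1.0  # assumed ratio; 1.0 means no over-provisioning
    vms: list = field(default_factory=list)

    def capacity(self) -> float:
        # Memory that may be promised to guests, including over-provisioning.
        return self.memory_gib * self.overcommit

    def used(self) -> int:
        return sum(size for _, size in self.vms)

    def fits(self, size_gib: int) -> bool:
        return self.used() + size_gib <= self.capacity()


def consolidate(vm_sizes: dict, host_memory_gib: int, overcommit: float = 1.0) -> list:
    """Place VMs on identical hosts using first-fit decreasing."""
    hosts = []
    # Place the largest guests first so they are not stranded at the end.
    for vm, size in sorted(vm_sizes.items(), key=lambda kv: kv[1], reverse=True):
        if size > host_memory_gib * overcommit:
            raise ValueError(f"{vm} cannot fit on any host")
        # Reuse the first host with room; otherwise bring up a new one.
        target = next((h for h in hosts if h.fits(size)), None)
        if target is None:
            target = Host(f"host-{len(hosts) + 1}", host_memory_gib, overcommit)
            hosts.append(target)
        target.vms.append((vm, size))
    return hosts
```

With six workloads totaling 50 GiB that would otherwise each occupy their own server, `consolidate(sizes, 32)` needs two 32 GiB hosts, and a 2.0 over-provisioning ratio brings that down to one. Real placement also has to weigh CPU, storage and network, so treat this as a rough model only.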

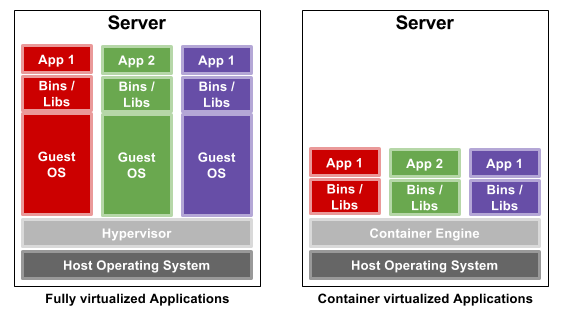

You have a piece of hardware emulation software called the Hypervisor. In a hosted setup, the Hypervisor rests on top of a host operating system installed on the physical machine (bare-metal hypervisors instead run directly on the hardware). This host OS could be Linux or Windows or anything else. The Hypervisor manages one or more virtualized operating systems, each of which is often referred to as a virtual machine (or VM). The Hypervisor also translates the virtual hardware defined in your virtual machines into the physical hardware connected to the underlying host. When plugged into a much larger ecosystem, these virtual resources are managed through a set of standardized APIs and from a centralized location.

Why virtualize the data center? There are many advantages to this approach. They include:

- Automated provisioning and deployment, without the need to assign someone to stand up a new physical machine or reconfigure an existing one.

- Scalable from one to thousands of virtual instances, managed under a single framework.

- Enables resource and power management through the consolidation of physical hardware.

- Guaranteed Quality of Service (QoS)—that is, you can monitor, throttle and redirect traffic as needed.

To put things into perspective, it would be simpler for us to travel back in time for a bit, maybe about 10 years. If you needed to provision X number of servers with Y amount of memory and Z amount of storage, all collocated in a data center nowhere near you, someone at the other end of that request had to physically set up and configure those servers in that one data center. And if your request spanned multiple data center locations, it multiplied the number of technicians required to handle the same amount of work (each in their own respective location).

You would need to open up a support ticket for every new request and for every new issue or failure. Sometimes it could take hours or days to resolve the problem or complete the request. How urgently a ticket was handled would also probably depend on the package you subscribed to with that data center.

This is not the case with a virtual environment. Standing up a new [virtual] node could take as little as seconds. It does not matter if you are provisioning one or hundreds of virtual servers, all simultaneously. Compare that to hours or days for just a handful of physical machines. And if the physical machine hosting that virtual instance were to catastrophically fail, your same virtual instance could be spun up on another physical machine.
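The failover behavior described above can be sketched as a toy placement table. The `fail_over` function, the host and VM names, and the "fewest VMs wins" rule are all hypothetical choices made for illustration; production platforms implement this through their own high-availability features, with far more bookkeeping.

```python
def fail_over(placement: dict, failed_host: str) -> dict:
    """Return a new placement with the failed host's VMs restarted elsewhere.

    `placement` maps a host name to the list of VM names it runs. Each
    orphaned VM goes to whichever surviving host currently runs the fewest.
    """
    # Copy the survivors so the caller's placement is left untouched.
    survivors = {h: list(vms) for h, vms in placement.items() if h != failed_host}
    if not survivors:
        raise RuntimeError("no surviving host to restart VMs on")
    for vm in placement.get(failed_host, []):
        target = min(survivors, key=lambda h: len(survivors[h]))
        survivors[target].append(vm)
    return survivors
```

Calling `fail_over({"host-a": ["vm1", "vm2"], "host-b": ["vm3"], "host-c": []}, "host-a")` leaves every VM assigned to either `host-b` or `host-c`, which is the point of the paragraph: the instance outlives the machine it was running on.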

Consumer demands and requirements continue to increase rapidly. With quicker and more automated deployment, both IT and DevOps teams are able to deliver fast enough to meet those demands.

Hardware virtualization forms the foundation of modern technologies, including Cloud Computing, which enables companies, service providers and individuals to dynamically provision the appropriate amount of computing resources (e.g., compute nodes, block or object storage, etc.) for their application needs.

Containers

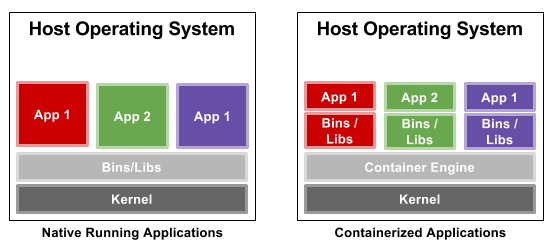

Containers are about as close to bare metal as you can get when running “virtual machines” (they may be called virtual machines but virtual machines they are not). They impose very little to no overhead. On Linux, for instance, LXC was introduced in 2008 and adopted much of its functionality from the Solaris Containers (or Solaris Zones) and FreeBSD jails that preceded it. Instead of creating a full-fledged virtual machine, LXC provides a virtual environment with its own process and network space. It uses namespaces to enforce process isolation and leverages the kernel’s very own control groups (cgroups) functionality, which limits, accounts for and isolates the CPU, memory, disk I/O and network usage of one or more processes. Think of this userspace framework as a very advanced form of chroot.

Note: LXC is not the only container technology. Others exist, including the very popular Docker.
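On a Linux host, both of the kernel mechanisms mentioned above are visible under `/proc`. The following is a small read-only sketch, not part of LXC itself: the helper names `parse_cgroup_line` and `current_namespaces` are made up for this example, and the sample paths in the usage note are invented.

```python
import os


def parse_cgroup_line(line: str) -> dict:
    """Split one line of /proc/<pid>/cgroup into its three fields.

    Format: hierarchy-ID:controller-list:cgroup-path
    (cgroup v2 reports hierarchy 0 with an empty controller list).
    """
    hierarchy, controllers, path = line.strip().split(":", 2)
    return {
        "hierarchy": int(hierarchy),
        "controllers": controllers.split(",") if controllers else [],
        "path": path,
    }


def current_namespaces(pid: str = "self") -> dict:
    """Map each namespace type (pid, net, mnt, ...) to its identifier.

    Returns an empty dict where /proc/<pid>/ns is unavailable, for
    example on a non-Linux system.
    """
    ns_dir = f"/proc/{pid}/ns"
    if not os.path.isdir(ns_dir):
        return {}
    result = {}
    for name in sorted(os.listdir(ns_dir)):
        try:
            # Each entry is a symlink whose target names the namespace.
            result[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            continue  # entry vanished or is not readable; skip it
    return result
```

Two processes that report the same identifier for, say, `pid` or `net` share that namespace; a containerized process would normally report different identifiers from a process on the host. Likewise, a line such as `5:cpu,cpuacct:/lxc/web01` (an invented path) says which control group is accounting for that process's CPU usage.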

But what exactly are containers? The short answer is that containers decouple software applications from the operating system, giving users a clean and minimal Linux environment while running everything else in one or more isolated “containers”. The purpose of a container is to launch a limited set of applications or services (often referred to as microservices) and have them run within a self-contained sandboxed environment.

This isolation prevents processes running within a given container from monitoring or affecting processes running in another container. Also, these containerized services do not influence or disturb the host machine. The ability to consolidate many services scattered across multiple physical servers onto a single machine is one of the many reasons data centers have chosen to adopt the technology.

Container features include the following:

- Security: network services can be run in a container, which limits the damage caused by a security breach or violation. An intruder who successfully exploits a security hole in one of the applications running in that container is restricted to the set of actions possible within that container.

- Isolation: containers allow the deployment of one or more applications on the same physical machine, even if those applications must operate under different domains, each requiring exclusive access to its respective resources. For instance, multiple applications running in different containers can bind to the same physical network interface by using distinct IP addresses associated with each container.

- Virtualization and transparency: containers provide the system with a virtualized environment that can hide or limit the visibility of the physical devices or system’s configuration underneath it. The general principle behind a container is to avoid changing the environment in which applications are running with the exception of addressing security or isolation issues.
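The network example in the Isolation point rests on a basic socket rule: an address and port pair can normally be claimed only once, so services that want the same port need distinct addresses. The sketch below shows that rule with plain sockets on the loopback interface rather than with real containers; `try_bind` is a hypothetical helper written for this illustration.

```python
import socket
from typing import Optional


def try_bind(address: str, port: int) -> Optional[socket.socket]:
    """Return a TCP socket bound to (address, port), or None if the bind fails."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        sock.bind((address, port))
    except OSError:
        # Typically "address already in use" or "cannot assign address".
        sock.close()
        return None
    return sock
```

Binding `127.0.0.1` with port `0` lets the operating system pick a free port; a second `try_bind("127.0.0.1", port)` on that same port then returns `None`. On Linux, where the whole 127.0.0.0/8 range normally answers on the loopback interface, `try_bind("127.0.0.2", port)` would be expected to succeed alongside the first socket. That is the situation the Isolation point describes, with each container holding its own address. Other platforms may need the extra address configured first.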

What is the Difference?

Container technologies abstract themselves from the main system they run on. They don’t need to know about the underlying hardware, and although they share the host’s kernel, they rarely if ever touch such low-level components directly. Containers are designed for single-purpose computing, such as hosting one application or enabling one or two functions, while a virtual machine serves as a complete hosting environment. The technologies are not one and the same, and they serve different needs.

While there is a lot of buzz and hype surrounding container technologies today, rest assured, they will not be supplanting hardware virtualization. Once the dust settles and users realize the problems each solves, the much-needed love for VMs will eventually return.


The author ‘Petros’ is incorrect in stating that ‘containers’ were introduced in year 2008 as LXC. This might apply if referring specifically to the word container, but even then Oracle Solaris offered containers and FreeBSD offered “jails” – both of which work exactly like LXC LONG before the stated Linux technology. I personally deployed FreeBSD Jails in 2006.

It is absolutely critical that information on introduction of newer technologies be stated factually, since many if not most of those just now entering the technological field will be grossly misinformed by all the hype and rhetoric going to Linux containers only.

While you are correct with your history, I was referring to the creation of LXC and not the general Containers with the date. And in the same sentence, I state that both Solaris Containers and FreeBSD jails preceded it. But nonetheless, I see now that I should rewrite part of that paragraph. Thank you.